The European Parliament has approved the world's first comprehensive framework for managing the risks of artificial intelligence (AI).

The sector has grown rapidly, generating huge profits but also raising fears about bias, privacy, and the future of humanity.

The AI Act works by classifying products according to their level of risk and adjusting the degree of scrutiny to match.

The law's authors say it was designed to make the technology more human-centric.

“The AI Act is not the end of the journey but the starting point for a new model of governance built around technology,” said MEP Dragos Tudorache.

The law puts the EU at the forefront of global efforts to address the risks associated with AI.

China already has a patchwork of AI rules in place. In October 2023, US President Joe Biden announced an executive order requiring AI developers to share data with the government.

But the EU has gone further.

“The AI Act signifies the start of a new era in AI and its importance cannot be overstated,” said Enza Iannopollo, principal analyst at Forrester.

“The EU AI Act is the world's first and only set of binding requirements to mitigate AI risks,” she said.

She said it would make the EU the de facto global standard for trustworthy AI, leaving other regions, including the UK, to play catch-up.

The UK hosted an AI safety summit in November 2023 but is not planning legislation along the lines of the AI Act.

How the AI Act works

The central idea of the law is to regulate AI according to its capacity to cause harm to society: the higher the risk, the stricter the rules.

AI applications that pose a clear risk to fundamental rights will be banned, including some that involve processing biometric data.

AI systems deemed high-risk, such as those used in critical infrastructure, education, healthcare, law enforcement, border management, or elections, will have to comply with strict requirements.

Low-risk services, such as spam filters, will face the lightest regulation, and most services are expected to fall into this category.

The Act also contains provisions to tackle the risks posed by the systems underpinning generative AI tools and chatbots such as OpenAI's ChatGPT.

Developers of some of these so-called general-purpose AI systems will have to be transparent about the material used to train their models and comply with EU copyright law.

Mr Tudorache told reporters before the vote that the copyright provisions had been one of the most heavily lobbied parts of the bill.

OpenAI, Stability AI, and Nvidia are facing lawsuits over the data they used to train their generative models.

Some artists, writers, and musicians have complained that the practice of “scraping” vast amounts of data from across the internet may infringe their copyright.

The legislation still has to clear several more stages before it formally becomes law.

Lawyer-linguists must check and translate the text, and the European Council, made up of representatives of the EU member states, must also endorse it, though this is expected to be a formality.

In the meantime, businesses will be working out how to comply with the new rules.

Kirsten Rulf, a former adviser to the German government who is now a partner at Boston Consulting Group, said more than 300 companies had contacted her firm.

She told the BBC that they wanted to scale up the technology and get the most value out of AI.

Businesses need and want legal certainty.

More Stories

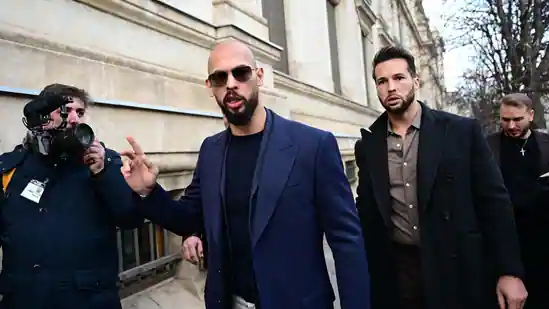

Trial for Rape and Human Trafficking will take Place in Romania for Andrew Tate and his Brother Tristan

A British individual tests the first Customised Melanoma Vaccination

Gaza Baby Delivered from Dead Mother’s Womb Perishes